If you are searching for what a close-up shot is, you are usually trying to do one of three things: understand the term, decide when to cut to a tight frame, or shoot a cleaner insert on your next project. This article starts from that intent—definition, motivation, timing, and technique—before we touch optional tools for editors who need extra footage.

Quick verdict

Here is the short answer:

| Question | Short answer |
| --- | --- |
| What it is | A framing choice that fills the frame with a face, hands, or object so detail or emotion dominates the shot. |
| Why it matters | It directs attention, carries emotion, and improves clarity on small screens. |
| When to use it | Reactions, product detail, emphasis after a wide shot, or pacing beats in dialogue. |
| When to skip it | When viewers still need geography, group blocking, or full action coverage. |

Below, we unpack each idea with practical guidance. If you already have solid footage, you can ignore the later section on AI-generated inserts until you need it.

Table of Contents

- 01 Quick verdict

- 02 What Is a Close-Up Shot?

- 03 Why Close-Ups Matter for Story and Emotion

- 04 When Should You Use a Close-Up?

- 05 How to Shoot Stronger Close-Ups on Set

- 06 Close-Ups in the Edit: AI B-Roll When Footage Is Missing

- 07 Four Steps: Close-Up Style Clips in insMind

- 08 Frequently Asked Questions

- 09 Put Close-Ups to Work on Your Next Cut

What Is a Close-Up Shot?

In classical terms, a close-up frames a subject so it dominates the image: often a person framed from the shoulders up, hands manipulating an object, or a product label filling the frame. It sits between a medium shot (more environment) and an extreme close-up (an eye, a lock of hair, a screw turning).

Close-ups are not “just zooming in.” They are an editorial promise: the audience is invited to notice texture, micro-expression, or fine detail that wide shots cannot carry. That is why interviews, thrillers, and product videos lean on them: the frame tells viewers, “This detail matters right now.”

Why Close-Ups Matter for Story and Emotion

Other tutorials (including industry blogs on the power of the close-up) emphasize three wins: emotion, information, and pacing. A reaction close-up lands a joke or a gut punch. An insert close-up shows how a tool works without dialogue. Rapid close-ups accelerate tension; lingering ones invite empathy.

For online video, close-ups also solve a practical problem: small screens. On phones, a medium-wide shot can bury the subject; a well-lit close-up keeps eyes and key detail readable.

Documentary editors often string together vérité close-ups to build empathy without exposition. In fiction, a sudden jump from a two-shot to a tight single can signal a power shift. Commercial directors use product close-ups to make materials feel tangible—metal, glass, fabric—because touch is implied even when viewers cannot literally feel the object.

When Should You Use a Close-Up?

Common situations include:

- Emphasis: Reveal a character’s reaction or shift in thought.

- Clarity: Show fingers on a dial, a serial number, or UI on a screen.

- Contrast: Cut from a chaotic wide shot to a still face to reset attention.

- Rhythm: Alternate close and wide to avoid visual monotony.

Avoid close-ups that repeat the information in the previous shot. Also avoid cropping so tightly that viewers lose spatial context, unless disorientation is the goal.

In dialogue scenes, the classic pattern is to move closer as stakes rise: start comfortable, then tighten framing when truth arrives. In tutorials, cut to a close-up the first time you name a small control or hazard so viewers know where to look next time the wide shot returns.

How to Shoot Stronger Close-Ups on Set

Brief, practical checklist:

- Focal length & distance: Longer lenses from a respectful distance flatter faces; ultra-wide lenses close to the skin distort features.

- Focus: Eyes should win focus for people; for products, pick the plane that carries the story beat.

- Lighting: Soft key light preserves skin detail; hard light can exaggerate texture—choose based on mood.

- Stability: Micro-wobble reads larger in close-up; use tripods, gimbals, or optical stabilization.

You do not need cinema gear; phones can deliver usable close-ups if audio and light are clean. The real skill is knowing why you are moving in before you roll.
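The focal length and distance item in the checklist can be made concrete with a little arithmetic. The sketch below uses the simple pinhole approximation (frame width at the subject ≈ distance × sensor width ÷ focal length) to estimate how far the camera sits from the subject for a given framing. The 36 mm sensor width (full frame) and the 0.5 m frame width (roughly head and shoulders) are assumptions chosen for illustration, and the function name is hypothetical. Real lenses deviate from this at very short distances.

```python
def subject_distance_m(frame_width_m: float,
                       focal_length_mm: float,
                       sensor_width_mm: float = 36.0) -> float:
    """Estimate camera-to-subject distance for a desired horizontal framing.

    Pinhole approximation: frame_width = distance * sensor_width / focal_length,
    so distance = frame_width * focal_length / sensor_width.
    The 36 mm default is an assumed full-frame sensor width.
    """
    if min(frame_width_m, focal_length_mm, sensor_width_mm) <= 0:
        raise ValueError("all inputs must be positive")
    return frame_width_m * focal_length_mm / sensor_width_mm


# Same framing (an assumed 0.5 m wide head-and-shoulders shot), two lenses.
for focal_length in (85, 24):
    distance = subject_distance_m(0.5, focal_length)
    print(f"{focal_length} mm lens: about {distance:.2f} m from the subject")
# 85 mm lens: about 1.18 m from the subject
# 24 mm lens: about 0.33 m from the subject
```

Under these assumptions, the 24 mm lens has to sit about a third of a meter from the face to match the framing of the 85 mm lens, which is the close working distance where wide lenses start to distort features.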

Close-Ups in the Edit: AI B-Roll When Footage Is Missing

Sometimes the timeline has a perfect line of narration but no matching insert. AI video tools can supply supplemental close-up motion: a slow push on a product still, a stylized hand detail, or an abstract background that reads “intimate” under voiceover. That is where an AI video generator complements traditional footage rather than replacing it.

One option is insMind’s AI Video Generator, which keeps text-to-video and image-to-video in one workspace so you can branch from a script or a reference still. If you need stable, cinematic motion with efficient iteration, the Seedance 2.0 model inside insMind is built for controlled results on faces, products, and short inserts—useful when a close-up beat is missing from your camera roll.

Four Steps: Close-Up Style Clips in insMind

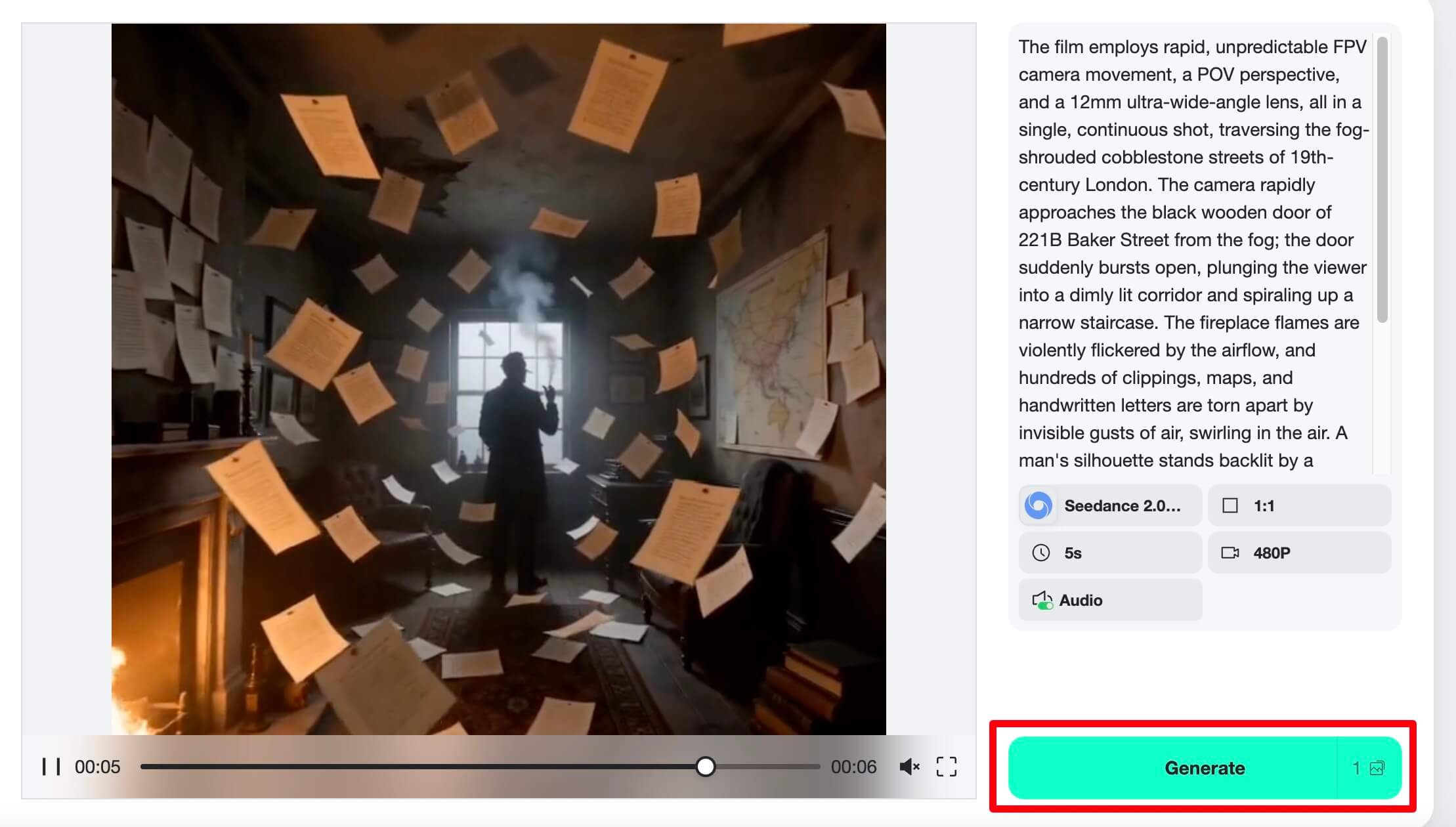

Follow this four-step path when you want AI-generated material that slots next to real close-ups on your timeline. The screenshots below are the same ones used in other insMind guides, but the instructions here are written for close-up storytelling rather than gear selection.

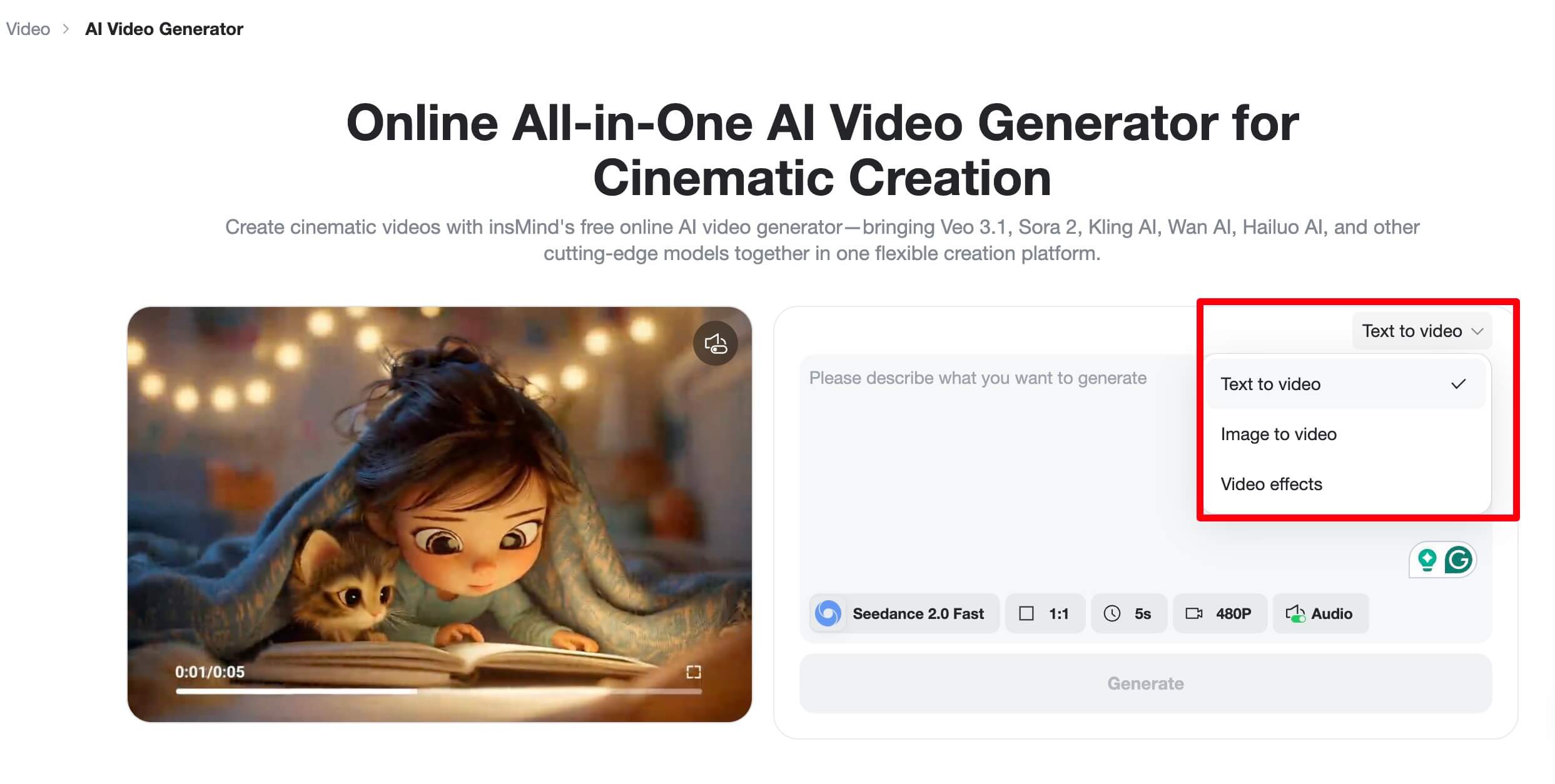

Step 1: Open the Generator and Choose Your Path

Open the AI Video Generator linked in the previous section. Decide whether you are starting from a written scene (describe a tight face or product macro in words) or from a still reference (upload a portrait or packshot to animate). Text-first works well for moody voiceover; image-first locks branding when your still is already framed like a close-up.

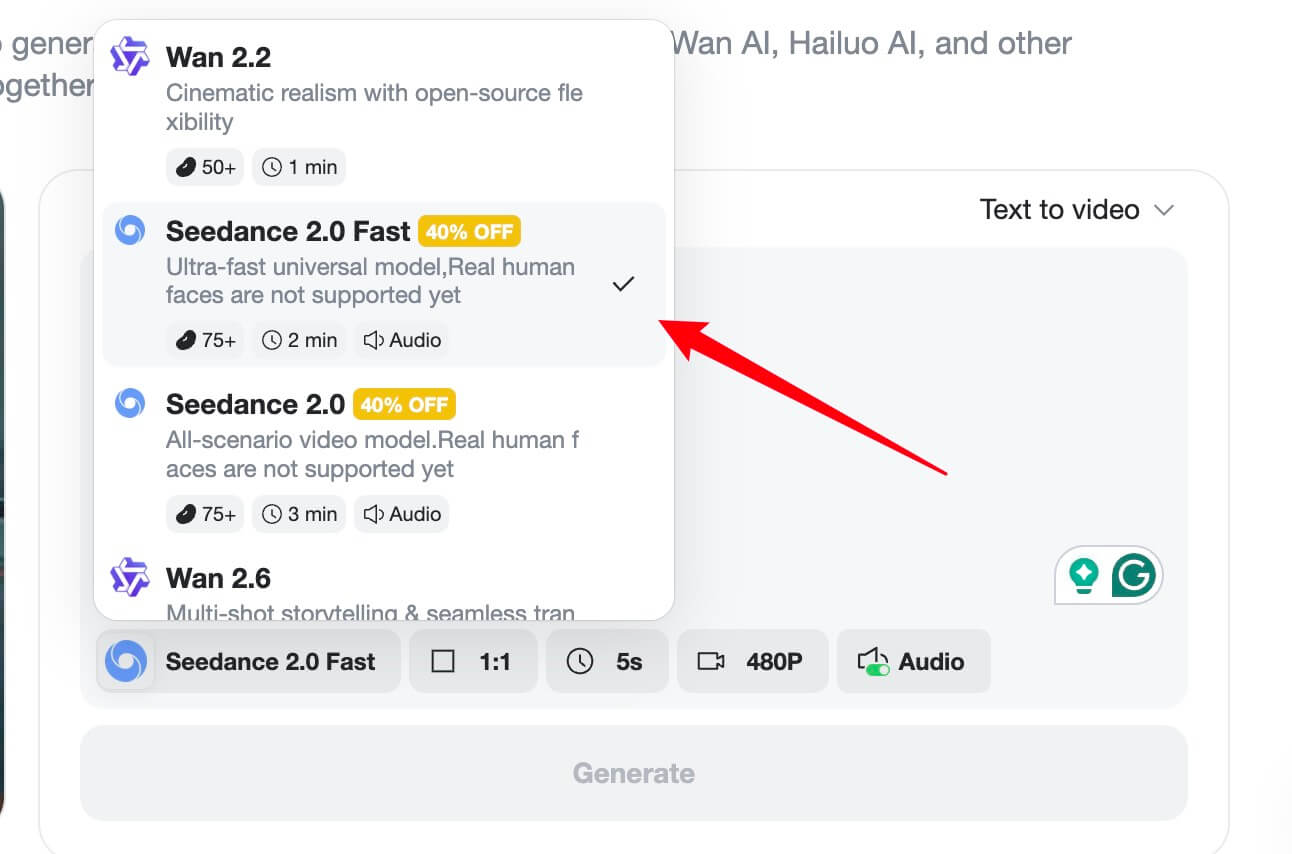

Step 2: Pick a Model (Try Seedance 2.0 for Cinematic Control)

Open the model list and match the job: for smooth, stable motion on faces and products, choose Seedance 2.0 when available (see the model overview linked above). Set aspect ratio to match your edit (16:9 for YouTube, 9:16 for vertical). Think like a cinematographer: note lens feel, lighting direction, and whether the “close-up” should feel gentle or dramatic.

Step 3: Write a Prompt That Describes the Close-Up Beat

Name the subject, distance, and emotion. Example: “Slow push-in close-up on hands tightening a microphone clip, soft key light, shallow depth of field, 16:9.” If you uploaded a still, describe the motion you want without contradicting the frame. Iterate by changing one variable at a time (lighting, speed, or camera move) until the clip matches your cut point.
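If you keep prompts in a script or notes file, one way to enforce the one-variable-at-a-time habit is to store the prompt as named parts and derive each variant from a base. The sketch below is purely illustrative: the `CloseUpPrompt` class, its field names, and the `changed_fields` helper are hypothetical and are not part of insMind or any model's API. It only assembles text that you would paste into the prompt box yourself.

```python
from dataclasses import asdict, dataclass, replace
from typing import List


@dataclass(frozen=True)
class CloseUpPrompt:
    """Hypothetical container for the parts of a close-up prompt."""
    subject: str
    camera_move: str = "Slow push-in"
    lighting: str = "soft key light"
    depth_of_field: str = "shallow depth of field"
    aspect_ratio: str = "16:9"

    def render(self) -> str:
        # Join the parts in the same order as the example prompt in the text.
        return (f"{self.camera_move} close-up on {self.subject}, "
                f"{self.lighting}, {self.depth_of_field}, {self.aspect_ratio}.")


def changed_fields(a: CloseUpPrompt, b: CloseUpPrompt) -> List[str]:
    """List the field names whose values differ between two prompts."""
    before, after = asdict(a), asdict(b)
    return [name for name in before if before[name] != after[name]]


base = CloseUpPrompt(subject="hands tightening a microphone clip")

# Each variant changes exactly one field relative to the base prompt.
harder_light = replace(base, lighting="hard side light")
vertical = replace(base, aspect_ratio="9:16")

for variant in (base, harder_light, vertical):
    print(variant.render())
```

Before generating a variant, checking that `changed_fields(base, variant)` returns a single name makes it easier to tell which change produced a better or worse clip.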

Step 4: Preview, Download, and Place on Your Timeline

Generate, watch on loop, then export MP4 for your NLE. Trim heads and tails so the motion lands on the beat where your real close-up ends or begins. Match color and grain roughly to your camera footage so the insert feels like one production, not two worlds.

Frequently Asked Questions

Is a close-up the same as zooming in post?

No. Digital zoom crops resolution; an in-camera or lens close-up preserves detail if exposure and focus are right. AI-generated inserts are another path: you create new pixels, so treat them like fresh footage, not a zoomed clip.
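The resolution cost of zooming in post is easy to estimate. The sketch below assumes a simple centered crop with no upscaling and uses a 3840×2160 (UHD) source as an example; the function names are hypothetical.

```python
from typing import Tuple


def cropped_resolution(width: int, height: int, zoom: float) -> Tuple[int, int]:
    """Pixel dimensions left after a centered crop at the given zoom factor."""
    if zoom < 1:
        raise ValueError("zoom must be 1.0 or greater")
    return int(width / zoom), int(height / zoom)


def pixels_kept_fraction(zoom: float) -> float:
    """Fraction of the original pixels that survive the crop (1 / zoom squared)."""
    if zoom < 1:
        raise ValueError("zoom must be 1.0 or greater")
    return 1.0 / (zoom * zoom)


# A 2x punch-in on UHD footage leaves an HD-sized area of real pixels.
print(cropped_resolution(3840, 2160, 2.0))  # (1920, 1080)
print(pixels_kept_fraction(2.0))            # 0.25
```

In other words, a 2x punch-in keeps only a quarter of the captured pixels, which is why a close-up framed in camera tends to hold more detail than the same framing cropped from a wide shot.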

When should I avoid a close-up?

When you need geography, group dynamics, or action coverage—stay wider. Also skip gratuitous close-ups that repeat information the audience already understood.

Can AI match my brand’s close-up style?

Often, yes, if you feed consistent reference stills and keep prompts aligned with your lighting and lens vocabulary. The Seedance 2.0 option described earlier is aimed at stable, cinematic results—test short clips before committing to a full campaign.

Put Close-Ups to Work on Your Next Cut

Close-ups are simple to describe and hard to master: they reward intention. Know what you want the audience to feel, light and frame for that purpose, then cut with rhythm. When footage runs short, the AI B-roll path outlined earlier, using the same generator and Seedance 2.0 workflow, can fill the gaps.

Jordan Lee

I write practical guides for creators at insMind, covering cinematography basics, AI video workflows, and how to keep edits intentional.